I have two ideas for simulating the process of attaching ribbons at Maker Faire and linking it to the data visualization. One uses AR and the other uses VR. (They are essentially the same, actually.)

1. Using HoloLens to enhance the visualization.

The most powerful feature of HoloLens is spatial mapping, which scans the user's current environment and then uses that spatial information to position the augmented model. My idea is to create a virtual fence and let users place it on the walls around them via spatial mapping. Users could then use the hand tracking provided by HoloLens to grab different ribbons and attach them to the fence in mid-air. When the user changes the ribbons on the virtual fence, the data shown on the website (the bar chart) changes accordingly. I believe this interaction is more intuitive than dragging a slider to change the ribbons.
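To make the link between the fence and the bar chart concrete, here is a minimal sketch of the data flow in Python. It is not HoloLens code: the `VirtualFence` class, its method names, and the ribbon categories ("red", "blue") are all hypothetical, and I am assuming each ribbon category maps to one bar of the chart.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass, field


@dataclass
class VirtualFence:
    """Hypothetical model of the ribbons currently attached to the AR fence."""

    ribbons: Counter = field(default_factory=Counter)

    def attach(self, category: str) -> None:
        # Called when the user places a ribbon of this category on the fence.
        self.ribbons[category] += 1

    def detach(self, category: str) -> None:
        # Called when the user removes a ribbon; never drops below zero.
        if self.ribbons[category] > 0:
            self.ribbons[category] -= 1

    def bar_chart_data(self) -> list[tuple[str, int]]:
        # One (category, count) pair per bar, sorted by category name.
        return sorted((c, n) for c, n in self.ribbons.items() if n > 0)


fence = VirtualFence()
fence.attach("red")
fence.attach("red")
fence.attach("blue")
fence.detach("blue")
print(fence.bar_chart_data())  # [('red', 2)]
```

In a real build, the headset app would send each attach or detach event to the website, which would redraw the bars from `bar_chart_data()`.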

The sketch of having the AR fence around the user while browsing the website. Sorry, I am really not good at drawing...

2. Using the 360 video to enhance the visualization.

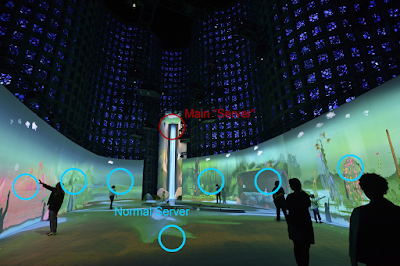

As a developer for both AR and VR, having compared HoloLens with VR headsets, I do not think there is any major difference between the two technologies in terms of the immersive experience (though they differ a great deal in development). To use VR to enhance the data visualization of the original website, instead of putting the fence on a wall near the user, I would give the user an "on-site experience" by bringing them to Maker Faire in 360 video. (This is just a thought and cannot be implemented, since no 360 video of the event was recorded.) Wearing the VR headset, the user could watch participants attaching ribbons to the fence in the 360 video. The bar chart would also appear in the VR environment and change in real time as people attach ribbons.
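As a rough illustration of how the bar chart could stay in sync with the video, the sketch below replays a list of timestamped ribbon attachments and returns the counts at a given playback time. The event list, the category names, and the `counts_at` helper are hypothetical; no such annotated recording exists.

```python
from __future__ import annotations

from bisect import bisect_right
from collections import Counter

# Hypothetical annotations: (seconds into the 360 video, ribbon category attached).
# The list must be sorted by timestamp.
EVENTS = [(3.0, "red"), (7.5, "blue"), (12.0, "red"), (20.0, "green")]


def counts_at(events: list[tuple[float, str]], t: float) -> Counter:
    """Return ribbon counts for every attachment at or before playback time t."""
    times = [ts for ts, _ in events]
    upto = bisect_right(times, t)  # index of the first event after time t
    return Counter(category for _, category in events[:upto])


print(dict(counts_at(EVENTS, 12.0)))  # {'red': 2, 'blue': 1}
```

Because the counts are computed from the playback time rather than accumulated frame by frame, the chart stays correct when the user pauses or scrubs backwards in the video.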

Conclusion

I have to admit that in most cases VR/AR is used in data visualization only for the sake of using a new technology, which is a stage nearly every technology goes through on its way to mass adoption. In Phase 1, I built an AR data visualization and did some research in this field. I found that VR/AR, which aims to give people a more immersive and realistic experience at the cost of ease of operation, is not suitable for most types of data visualization. It is better suited to simulating the process by which the data is created [Bryson 1996].

Reference

Bryson, Steve. "Virtual reality in scientific visualization." Communications of the ACM 39.5 (1996): 62-71.